So, what's going on with Musicboard? | TechCrunch

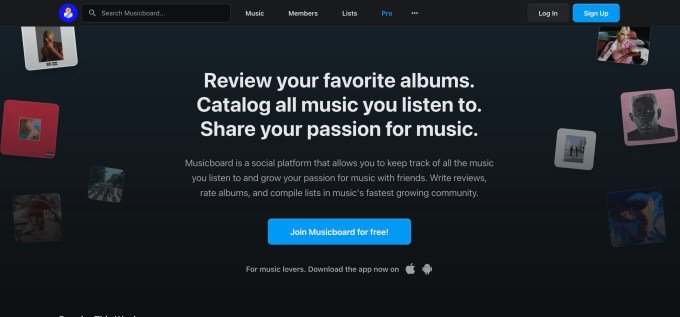

Musicboard, an app for music discovery and recommendations, has been struggling, according to its users. Over the past several months, users said the app experienced outages, the website went offline, and the Android app disappeared from the Play Store. This has concerned its devoted, if small, user base. (The app has been downloaded around 462,000…